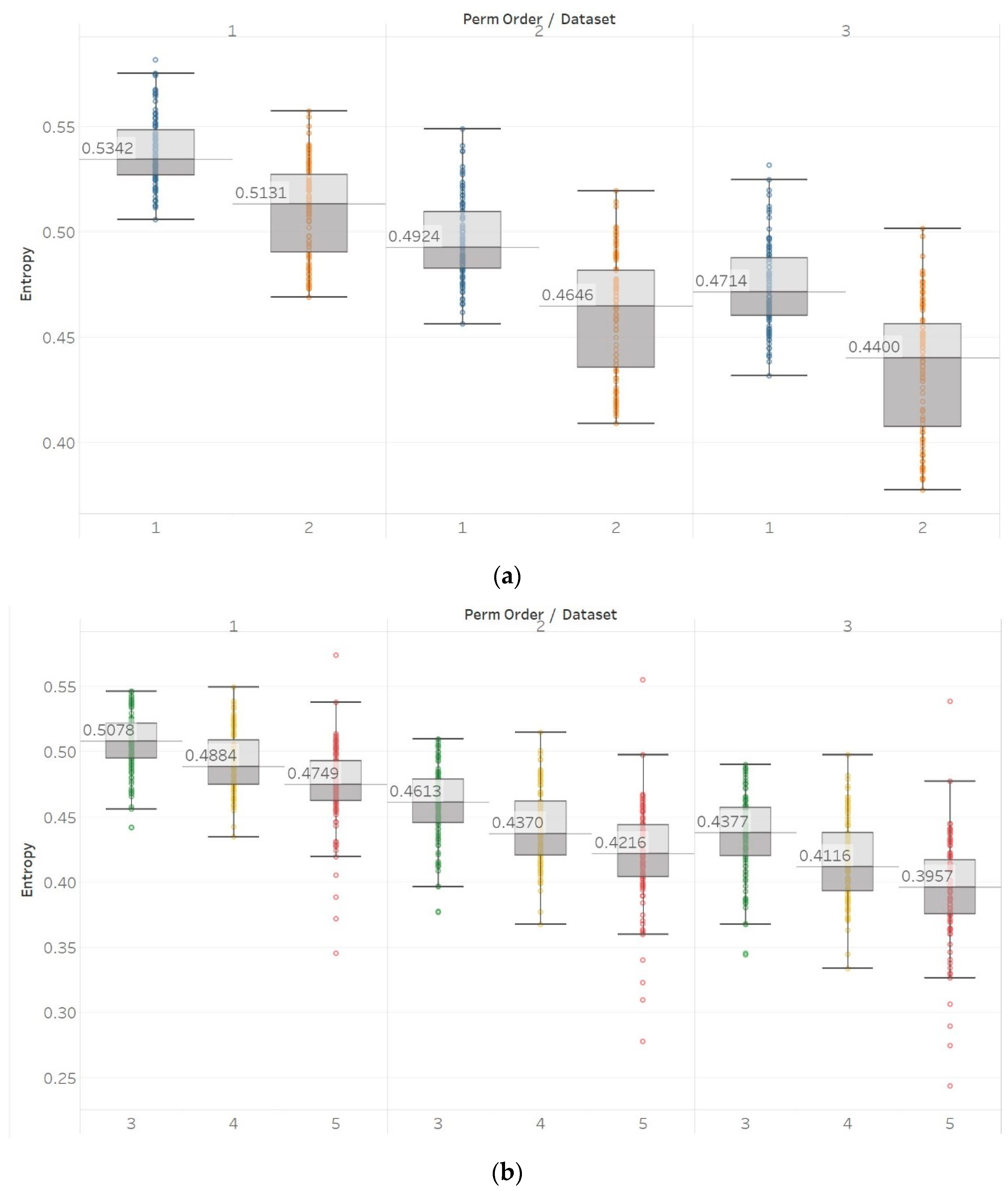

Now, when it occupies positions 3 and 4, we can actually distribute the other balls in two patterns (A, B, cluster, B, A) or (A, B, cluster, A, B).įinally, the cluster could be positioned at 4 and 5, or 5 and 6. First, the cluster could be at the far left. This one is slightly tricky because we need to account for a lot of cases, as you’ll see. The non-clustered balls could of course sit at the ends, but they could also sit at positions 1 and 4, or positions 3 and 6 (see below).įor each of these cases, the colors could again be distributed in 6 ways, and the same-colored balls rearranged in 8 ways, yielding two-cluster configurations. Let’s now consider the case of two clusters.

That means there are ways to rearrange each of the 6 configurations above, totaling possible three-cluster configurations. In the image above, the grey balls could be either red, blue, or green, then the white balls could represent either of the remaining two colors, while the black balls must represent the last color.įurthermore, each cluster could be formed in two ways- remember that balls of the same color are distinguishable. The first cluster could be any of the three colors, the second could be any of the remaining two, and the third must be the final color. That is, the two reds, two blues, and two greens are each together. There could be up to three clusters in a configuration. To find out, we just have to count up all the possible configurations and weight them accordingly. Supposing that I randomly order the balls in a straight line, I’d like to know exactly how many “clusters” of balls of the same color I can expect to get, on average. The balls might look something like this: Furthermore, let’s suppose we can distinguish between balls of the same color, perhaps because one of them has a decoration on it. It won’t be immediately clear why I’m doing this, and that’s ok, but consider a very simple system of six balls, two of each of the colors red, blue, and green. Let’s focus on this make-believe idea of clustering for a moment in hopes of gaining some insight into how entropy works. Now it’s time to do one of my favorite things-stretching an analogy to the point of nearly breaking, and then breaking it. Systems with a large amount of clustered, usable energy have very low entropy (think dry baking soda and vinegar in separate containers), but as a closed system evolves over time, that usable energy dissipates into useless, “unclustered” heat (think about the uniform mixture of baking soda and vinegar long after the “explosion”). I’m certainly not going to worry about defining it precisely-just consider the following qualitative description instead. 
Let me begin by creating out of nothing a very unscientific term called “clustering” of energy. Leno Pedrotti at the University of Dayton, who first introduced me to this idea. So what does it measure? I find it best to consider it not as a measure of disorder, but as a measure of uniformity in a system. Thank you to Dr. In fact, many textbooks use the term disorder explicitly to introduce the concept however, this description tends to lead to misunderstanding of the concept.Įntropy is a real, measurable quantity, just like volume, energy, or momentum. (Hint: That image on the right looks fairly orderly to me.)Įntropy is commonly referred to with the terms disorder, randomness, and chaos.

#Opposite of entropy free

Feel free to answer with your gut on this. They may seem like leading questions-they most certainly are.

Perhaps these seem like trick questions-they’re not. Which image below looks more disorderly? Which seems more chaotic?
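The case-by-case counting of configurations can be double-checked by brute force. Below is a minimal Python sketch (not part of the original post) that enumerates all 6! = 720 orderings of the six distinguishable balls and tallies how many clusters each one has. It assumes a “cluster” means the two balls of one color sitting side by side; the ball labels such as `R1` and all function names are hypothetical, chosen only for illustration.

```python
from collections import Counter
from itertools import permutations

# Hypothetical labels: the first letter is the color (R, B, G); the digit stands
# in for the "decoration" that makes same-colored balls distinguishable.
BALLS = ("R1", "R2", "B1", "B2", "G1", "G2")


def count_clusters(order):
    """Number of colors whose two balls sit next to each other."""
    colors = [ball[0] for ball in order]
    # Each color appears exactly twice, so every adjacent same-color pair
    # corresponds to exactly one cluster.
    return sum(1 for left, right in zip(colors, colors[1:]) if left == right)


def cluster_distribution(balls=BALLS):
    """Map each cluster count to how many orderings of the balls produce it."""
    return Counter(count_clusters(order) for order in permutations(balls))


def expected_clusters(dist):
    """Average number of clusters when every ordering is equally likely."""
    total = sum(dist.values())
    return sum(k * n for k, n in dist.items()) / total


def split_positions(order):
    """Yield 1-based positions of any color whose two balls are NOT adjacent."""
    colors = [ball[0] for ball in order]
    for color in sorted(set(colors)):
        first, second = [i for i, c in enumerate(colors) if c == color]
        if second - first > 1:
            yield (first + 1, second + 1)


def two_cluster_split_positions(balls=BALLS):
    """Positions taken by the non-clustered pair in two-cluster orderings."""
    return {
        pos
        for order in permutations(balls)
        if count_clusters(order) == 2
        for pos in split_positions(order)
    }


if __name__ == "__main__":
    dist = cluster_distribution()
    for k in sorted(dist, reverse=True):
        print(f"{k} clusters: {dist[k]} configurations")
    print("total orderings:", sum(dist.values()))
    print("expected clusters:", expected_clusters(dist))
    print("two-cluster split positions:", sorted(two_cluster_split_positions()))
```

Run as a script, it reports 48 three-cluster, 144 two-cluster, 288 one-cluster, and 240 zero-cluster configurations out of 720, for an average of exactly 1.0 cluster. `two_cluster_split_positions()` returns `{(1, 4), (1, 6), (3, 6)}`, the three placements of the non-clustered pair in the two-cluster case. Brute force is fine at this size, but the number of orderings grows factorially, so it is only a check, not a substitute for the counting argument.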